AI Governance in Contact Centers: Why It Matters and How to Get It Right

Contact centers today run on AI. From AI-powered bots handling first-contact queries to speech analytics scoring every call, AI technologies touch nearly every layer of the customer experience. But here is the uncomfortable truth: most organizations have deployed AI faster than they have learned to govern it. Responsible AI governance in contact center operations is no longer a nice-to-have compliance exercise. It is an operational discipline that determines whether your AI systems build customer loyalty or quietly erode it. If you are among the business leaders running a contact center with hundreds of human agents and thousands of daily customer interactions, governance is the foundation that keeps everything working the way it should.

What Does AI Governance Mean for Contact Centers?

AI governance is the set of policies, processes, and accountability structures that ensure AI systems in a contact center operate fairly, transparently, and within regulatory boundaries. In practice, this spans AI-powered chatbots, voicebots, real-time agent assist tools, intelligent call routing algorithms, speech analytics engines, and the emerging wave of agentic AI platforms that can take autonomous action on behalf of customers. These AI technologies in contact centers include customer-facing bots that handle routine inquiries, virtual agent systems that understand intent through natural language processing, and AI assistants that support human agents during live interactions by surfacing relevant information and suggesting next-best actions.

Think of it this way. When an AI-powered bot misidentifies a customer's intent and routes them through three transfers before they finally reach the right agent, that is not just a CX failure. It is a governance failure. The system lacked the guardrails, escalation triggers, and oversight mechanisms that would have caught the problem before it compounded. Without effective governance, AI-driven decisions like these go unchecked and erode the customer journey at every touchpoint.

Governance is not about slowing innovation down. It is about making sure contact center AI operates within defined trust boundaries, aligned with standards like the NIST AI Risk Management Framework, so your organization can scale AI confidently and responsibly. AI governance frameworks help ensure that AI systems operate safely, consistently, and responsibly at scale, enabling organizations to navigate regulatory pressures while maintaining confidence in their customer experience.

Why Do Contact Centers Need AI Governance Now?

AI adoption in contact centers is accelerating, but governance has not kept pace. Only about 25% of contact centers have successfully integrated AI automation into their daily operations, which means the majority are still figuring out how to manage what they have already deployed. That gap between AI deployment and oversight is where compliance risks live, and it is growing wider as more organizations adopt AI technologies without matching governance practices.

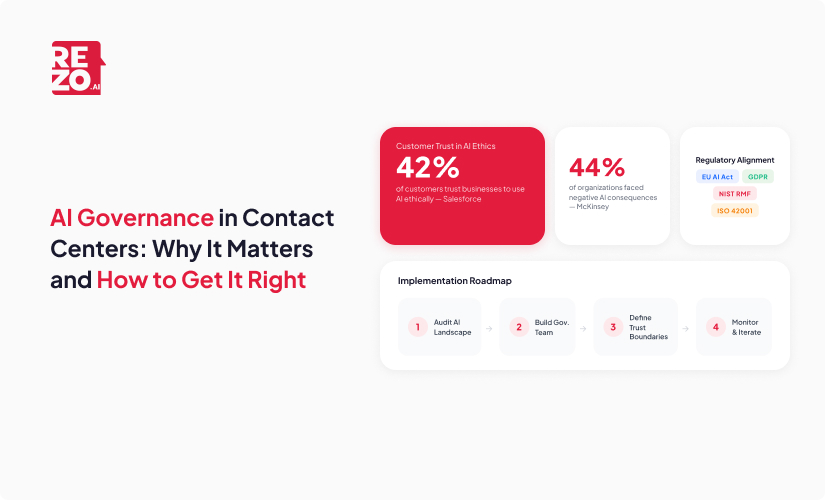

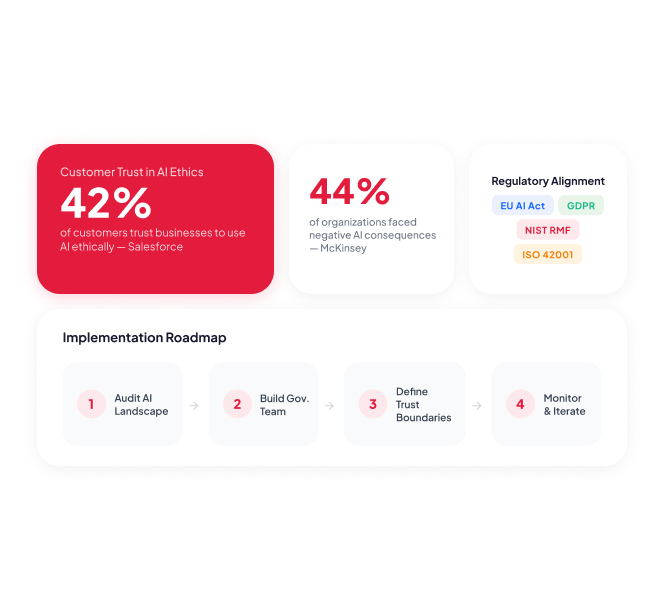

The data makes the urgency clear. McKinsey found that 44% of organizations have experienced negative consequences from AI deployment, ranging from inaccurate outputs to reputational damage and compliance issues. In a contact center handling thousands of customer conversations daily, even a small governance gap can compound quickly. A biased sentiment model that consistently flags certain customer demographics as "angry," an AI-powered chatbot that hallucinates a refund policy that does not exist, or an agent assist tool that surfaces outdated compliance language: these are not hypothetical problems. They are happening now. Without proper AI governance, organizations risk operational inefficiencies, compliance violations, and damage to their brand reputation.

On the customer side, confidence is fragile. Salesforce data shows that only 42% of customers trust businesses to use AI ethically. For contact centers that serve as the primary touchpoint for customer relationships, that deficit translates directly into lower engagement with automated self-service channels, higher escalation rates, and weakened brand perception. Many customers still prefer speaking with human agents, and when AI-driven systems fail to meet customer expectations, those preferences only harden. Understanding how AI helps build loyalty and lasting relationships is essential for any CX leader navigating this landscape.

Looking ahead, the stakes only grow. Gartner predicts that agentic AI will autonomously resolve 80% of common customer service issues by 2029, shifting traditional contact centers toward AI-first resolution models. At the same time, more than 40% of AI initiatives could be abandoned by 2027 due to inadequate risk controls. As AI shifts from assisting human agents to making autonomous decisions across the customer journey, governance becomes the difference between a system that builds loyalty and one that erodes it.

The regulatory landscape reinforces this urgency. The EU AI Act is now in enforcement, California's chatbot disclosure law took effect in January 2026, and FINRA has introduced dedicated GenAI guidance for financial services. Effective governance accelerates AI adoption by reducing uncertainty and establishing clear guidelines for data access and accountability, positioning it as a growth strategy rather than just a risk mitigation exercise. Contact centers that wait will find themselves playing catch-up with both regulators and customers.

What Are the Core Pillars of AI Governance in Contact Centers?

A practical AI governance framework for contact centers rests on five pillars. Each one maps directly to the workflows, risks, and regulatory requirements that CX leaders deal with every day.

Data Privacy and Security

Contact centers process sensitive customer data across voice recordings, chat transcripts, conversation logs, screen shares, and payment information. Governance must define exactly what data AI systems can access, how long it is retained, and who can review it. Data protection policies should require organizations to collect only the information strictly necessary for the AI platform's specific objective, adhering to data minimization principles.

For BFSI contact centers, this means aligning with PCI DSS for payment data and ensuring that AI-driven call recordings comply with financial regulations. Healthcare contact centers must map AI data flows against HIPAA requirements. Across every industry, GDPR and CCPA provide the baseline, but governance needs to go further by establishing data minimization rules specific to each AI tool in your stack. Organizations using AI in contact centers should also implement strict end-to-end encryption for all customer interactions and document every internal and third-party AI tool, including its purpose and data sources.

Bias Prevention and Fairness

AI models trained on historical contact center data can inherit biases that are difficult to spot without active auditing. A speech analytics engine might score certain accents as lower confidence. A routing algorithm might deprioritize certain customer segments based on patterns baked into training data. A sentiment analysis model might misclassify cultural communication styles as negative.

Governance should mandate regular bias audits across large language models, intent classification, and customer segmentation algorithms. This means building diverse training datasets, running fairness tests across demographic groups, and creating clear remediation processes when bias is detected. Organizations should use automated AI tools to review 100% of interactions for compliance and bias, ensuring AI models remain accurate and ethical as they evolve. Utilizing explainable AI (XAI) models allows for auditing AI-driven outcomes and helps mitigate risks associated with biased outputs and privacy breaches.
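
One simple way to picture a fairness test across groups is to compare how often a model flags interactions as negative in each group. The sketch below is illustrative only: the group labels, the sample records, and the 0.8 review threshold are assumptions made for this example, not values taken from any framework or vendor.

```python
from collections import defaultdict

def flag_rates(records):
    """Return the share of interactions flagged as negative for each group."""
    totals = defaultdict(int)
    flagged = defaultdict(int)
    for group, is_flagged in records:
        totals[group] += 1
        if is_flagged:
            flagged[group] += 1
    return {group: flagged[group] / totals[group] for group in totals}

def disparity_ratio(rates):
    """Lowest group rate divided by the highest; 1.0 means identical rates."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nothing was flagged in any group
    return min(rates.values()) / highest

# Hypothetical audit sample: (group label, flagged as negative by the model)
sample = [
    ("A", True), ("A", False), ("A", False), ("A", False),
    ("B", True), ("B", True), ("B", False), ("B", False),
]

rates = flag_rates(sample)
REVIEW_THRESHOLD = 0.8  # assumed cutoff; set by your own governance policy
needs_review = disparity_ratio(rates) < REVIEW_THRESHOLD
```

A ratio well below the chosen threshold does not prove bias on its own, but it is a reasonable signal to send the model for human review and remediation.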

Transparency and Disclosure

Customers have a right to know when they are interacting with AI. Governance should define what to disclose, when to disclose it, and how, across every customer-facing channel: voice bots, chat, email, and SMS. Organizations should clearly inform customers when they are interacting with a virtual agent and provide easy self-service options to escalate to a human agent at any point.

Internal transparency matters just as much. Human agents should understand how AI-powered suggestions, quality scores, and predictive analytics are generated, so they can trust the AI tools they use and flag issues when something seems off. This transparency directly supports agent experience and boosts confidence in using AI within daily workflows.

Human Oversight and Escalation Design

Saying "human-in-the-loop" is not enough as an AI governance policy. The real work is defining specific escalation triggers, confidence thresholds, and handoff protocols. When should AI act autonomously, and when should it bring in a human agent? What happens when AI confidence drops below a certain threshold, when customer sentiment turns sharply negative, or when the interaction is emotionally charged, such as a dispute, a health concern, or a complaint?
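
As a rough illustration of what those triggers might look like once they are written down, the sketch below checks three signals for each customer turn. The field names, threshold values, and topic labels are hypothetical placeholders rather than recommended settings; a governance council would set and review the real ones.

```python
from dataclasses import dataclass

@dataclass
class TurnSignals:
    ai_confidence: float  # 0.0 to 1.0, from the bot's intent model
    sentiment: float      # -1.0 (very negative) to 1.0 (very positive)
    topic: str            # e.g. "billing_dispute", "order_status"

# Assumed policy values for illustration only.
MIN_CONFIDENCE = 0.70
MIN_SENTIMENT = -0.50
SENSITIVE_TOPICS = {"billing_dispute", "health_concern", "complaint"}

def escalation_reasons(signals: TurnSignals) -> list[str]:
    """Return every trigger that fired; an empty list means AI may continue."""
    reasons = []
    if signals.ai_confidence < MIN_CONFIDENCE:
        reasons.append("low_confidence")
    if signals.sentiment < MIN_SENTIMENT:
        reasons.append("negative_sentiment")
    if signals.topic in SENSITIVE_TOPICS:
        reasons.append("sensitive_topic")
    return reasons
```

Returning the list of reasons instead of a bare yes or no keeps each handoff auditable and gives the receiving agent some context on why the AI stepped back.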

Governance must also audit the quality of AI-to-human handoffs through regular human review. It is not enough to measure whether handoffs happen. You need to know whether they happen smoothly and whether the support agent receives enough context to pick up where AI left off. The distinction between AI agents and traditional chatbots makes this especially important, since agentic AI systems handle far more complex decision chains. Clear escalation and handoff paths ensure that not every interaction is automated and that customers feel supported throughout.

Accountability and Ownership

Who owns AI governance in your contact center? If the answer is unclear, that is your first governance gap. Best practice is to establish a cross-functional governance council that brings together CX leadership, IT and engineering, legal and compliance, operations, and QA or training teams.

Each function has a distinct role. CX defines acceptable AI behaviors aligned with the organization's values. IT manages the technical controls across the AI platform and contact center software. Compliance monitors regulatory alignment. QA ensures human agents are trained for AI-augmented workflows. Without clear ownership, governance becomes everyone's concern and nobody's responsibility. Establishing this governance structure with dedicated governance bodies and clearly assigned roles enables organizations to treat governance as an ongoing operational discipline.

How to Implement AI Governance in Your Contact Center

Knowing the pillars is one thing. Putting them into practice is another. Here is a phased roadmap that works whether you are just starting with contact center AI or managing a mature, multi-channel deployment.

Phase 1: Audit Your Current AI Landscape

Start by cataloging every AI tool in operation across your contact center: chatbots, voicebots, AI agents, real-time agent assist platforms, speech analytics, call routing engines, and workforce management systems. For each tool, assess three things. First, what customer data does it access? Second, what AI-driven decisions does it make or influence? Third, what compliance guardrails are currently in place?

This audit will reveal gaps where AI is operating without clear governance, whether that is an AI-powered chatbot with no escalation threshold or a speech analytics tool processing conversation logs without defined retention policies. Document every AI system thoroughly to establish a complete picture of how AI is being used across the organization.
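
The audit can start as something as simple as a structured inventory. The sketch below records the three audit questions for each tool and lists the tools that touch customer data without any documented guardrail. The record fields, tool names, and the retention policy shown are all hypothetical examples.

```python
from dataclasses import dataclass, field

@dataclass
class AIToolRecord:
    name: str
    data_accessed: list[str]   # what customer data the tool touches
    decisions: list[str]       # what it decides or influences
    guardrails: list[str] = field(default_factory=list)  # controls in place

def governance_gaps(inventory):
    """Names of tools that access customer data but list no guardrails."""
    return [tool.name for tool in inventory
            if tool.data_accessed and not tool.guardrails]

# Hypothetical inventory entries for illustration.
inventory = [
    AIToolRecord("support_chatbot", ["chat transcripts"], ["intent routing"]),
    AIToolRecord("speech_analytics", ["call recordings"], ["QA scoring"],
                 ["defined retention policy"]),
]

gaps = governance_gaps(inventory)
```

In this example only the chatbot is reported, because it has no guardrail on record. A spreadsheet would serve the same purpose; the point is that every tool gets the same three questions answered.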

Phase 2: Build a Cross-Functional Governance Team

Governance cannot live in a single department. Bring together CX leadership, IT and engineering, legal and compliance, operations, and QA. Define roles clearly: who sets AI governance policies, who monitors AI behavior, who responds to incidents, and who reports findings to executive leadership.

Establish a governance charter that includes meeting cadence, escalation paths, and decision rights. This team becomes the operating backbone of your governance program. A dedicated AI governance council should oversee policy development and monitor AI performance, ensuring that practices around AI usage align with both business needs and organizational values. By using AI monitoring dashboards alongside governance checkpoints, this council maintains visibility into how AI performs across every channel.

Phase 3: Define Trust Boundaries and Policies

With your team in place, set the rules. Define confidence thresholds for AI decision-making: at what point should a chatbot stop trying to resolve an issue and escalate to a human agent? Create transparency policies for each customer-facing channel. Document data handling rules, bias audit schedules, and incident response playbooks. Develop and train staff on AI policies that cover ethical standards to ensure AI complements rather than replaces the human touch.

Map every policy to specific contact center workflows: chatbot containment rules, agent-assist guardrails for suggestion accuracy, and speech analytics review protocols for flagged interactions. Organizations should start with clear goals to ensure AI is applied where it delivers the most value and avoid over-automation that creates friction instead of operational efficiency. Prepare agents and supervisors for AI integration by training them on how AI supports their work, setting clear workflows for feedback, and boosting agent productivity through well-defined AI-human collaboration.

Phase 4: Monitor, Measure, and Iterate

Governance is not a one-time project. Define key metrics and KPIs that tell you whether your governance program is actually working. Track bias incident rates, escalation accuracy (did the right interactions get escalated to the right agent?), compliance audit pass rates, customer satisfaction scores, and AI containment rates. Continuous testing of AI models helps organizations detect performance degradation or data drift before it impacts customer needs.
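
To show how two of these KPIs could be computed, here is a minimal sketch. The definitions are assumptions made for illustration: containment is treated as the share of AI sessions that ended without a human handoff, and escalation accuracy as the share of human-reviewed sessions where the AI's decision to escalate or continue matched the reviewer's judgment. Your own definitions may differ.

```python
def containment_rate(total_ai_sessions, escalated_sessions):
    """Share of AI sessions that ended without a human handoff."""
    if total_ai_sessions == 0:
        return 0.0
    return (total_ai_sessions - escalated_sessions) / total_ai_sessions

def escalation_accuracy(reviewed):
    """Share of reviewed sessions where the AI's decision matched the reviewer.

    `reviewed` is a list of (was_escalated, should_have_escalated) pairs
    produced by a human QA review.
    """
    if not reviewed:
        return 0.0
    correct = sum(1 for did, should in reviewed if did == should)
    return correct / len(reviewed)

# Hypothetical figures for one reporting period.
containment = containment_rate(total_ai_sessions=200, escalated_sessions=50)
accuracy = escalation_accuracy([
    (True, True),    # escalated, and should have been
    (False, False),  # contained, correctly
    (True, False),   # escalated unnecessarily
    (False, True),   # missed escalation
])
```

Reading the two numbers together matters: a high containment rate paired with low escalation accuracy suggests the AI is holding on to interactions it should have handed off.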

Implement continuous monitoring for AI outputs and real-time insights rather than relying solely on periodic audits. Build feedback loops where both human agents and customers can flag AI issues in real time. Contact center AI platforms continuously analyze customer interactions to uncover trends, sentiment, and common issues, and your governance framework should leverage these same capabilities to refine its own effectiveness. Revisit your governance practices at least quarterly, because both AI capabilities and regulations are evolving faster than annual review cycles can keep up with. Staying current with contact center automation trends will help your governance framework evolve alongside the technology it oversees.

How AI Governance Builds Customer Experience and Trust

Governance is often framed as a cost center or a compliance burden. That framing misses the point. Well-governed AI actually drives better business outcomes and stronger customer experience across every channel.

Customers who know an organization governs its AI responsibly are more willing to engage with self-service channels, including AI-powered voice systems that understand intent and AI agents that handle routine inquiries without long wait times. That improves chatbot containment rates, reduces average handle time, and lowers cost-to-serve, all without sacrificing customer satisfaction. Remember that only 42% of customers trust businesses to use AI ethically. The organizations that close that customer trust gap through responsible AI governance will see measurable CX gains.

Effective governance also accelerates AI adoption. When governance frameworks promote transparency and accountability in AI operations, business leaders gain the confidence to expand contact center AI across the customer journey, from self-service options and personalized interactions to predictive analytics that anticipate customer needs before they arise. AI-powered contact centers can automate authentication using voice biometrics, unearth previous customer conversations through knowledge bases, and help protect confidential customer data, but only when clear governance structures ensure these AI systems operate within defined boundaries.

In regulated industries like BFSI and healthcare, governance readiness is increasingly becoming a market differentiator. When enterprise buyers evaluate contact center software vendors, governance maturity shows up in compliance audits, security assessments, and RFP scorecards. AI governance helps organizations align their AI initiatives with regulatory standards and ethical considerations, facilitating responsible use of AI at scale.

Human agents benefit from strong governance too. When the rules around AI-powered tools are clear, when support agents understand how suggestions are generated and when escalation kicks in, they adopt AI with more confidence and less friction. This improves agent productivity and the overall agent experience. AI assistants that surface relevant information, automate repetitive tasks like after-call summarization, and reduce time searching through knowledge bases all perform better when governed by clear policies. Generative AI saves real money by automating manual processes, improving first-call resolution, and lowering turnover, but only when organizations embed these AI technologies into existing workflows with proper oversight. AI-powered self-service systems also reduce wait times for customers who prefer quick resolution, while freeing human agents to focus on complex and emotionally charged interactions. Governance does not just protect customers. It empowers the people who serve them.

What Comes Next: The Future of AI Governance in Contact Centers

Agentic AI is changing the governance equation. When AI systems can autonomously chain multiple actions, such as looking up an account, applying a credit, scheduling a callback, and sending a confirmation, governance must evolve from monitoring individual outputs to governing entire decision chains. The rules that work for a simple chatbot intent classification will not be sufficient for an AI-driven system that takes ten sequential actions on a customer's behalf.

Multi-agent orchestration adds another layer. Forrester has identified 2026 as the breakthrough year for multi-agent systems, and the prediction that agentic AI will handle 80% of common service issues by 2029 means governance frameworks will need to manage interactions between AI agents and AI systems, not just between AI and humans. Standards like ISO/IEC 42001, the first AI management system standard, will become increasingly important as organizations formalize their approach to using AI responsibly.

Regulations will continue tightening. But the contact centers that build governance muscle now will not be scrambling to adapt. They will already be there. AI contact centers help businesses anticipate customer needs, reduce friction, and meet customers where they are, but only when governed by frameworks that evolve with the technology. The organizations that treat AI governance as a living, evolving practice, rather than a one-time project, are the ones that will lead their industries in both compliance and customer experience.

Frequently Asked Questions

What tools are used for AI governance in contact centers?

Common governance tools include bias detection platforms, automated compliance monitoring systems, AI output auditing dashboards, and data lineage trackers. Many organizations also use governance frameworks like NIST AI RMF and ISO/IEC 42001 to structure their programs alongside dedicated monitoring software for contact center AI.

Can small contact centers implement AI governance effectively?

Yes. Small contact centers can start with a focused governance checklist covering data protection, escalation thresholds, and bias auditing for AI models. Frameworks like NIST AI RMF are scalable. The key is assigning clear ownership and building governance into existing workflows rather than creating a separate bureaucracy. Even with limited resources, organizations can prioritize high-impact use cases first to build momentum and prove the most value quickly.
